In order to understand cognitive bias, let's first imagine a situation:

You are analyzing the results of a research study for your company's development of a new product.

While analyzing the data, you notice that some findings confirm and others contradict the theories that emerged at the beginning of the project.

However, when it comes time to present the results to the stakeholders, your colleague gathers and shows only the information that confirms the theories he had at the beginning of the project. He leaves out a lot of data that contradicts his position, even though it is just as important as the confirming data.

The stakeholders love the presentation and approve a decision that may not be the best solution.

Now what?

Although this may look like bad faith, your colleague may not even realize that he presented the data in a biased way. He simply wanted to confirm his own point of view and was averse to contrary information.

As you can see from the example, cognitive bias led to a decision that was not impartial. Therefore, it is important to know how to identify the types of cognitive biases we are subject to and how we can minimize or neutralize them!

So, do you want to know more about these biases and how to avoid them? Read on in the article!

What is cognitive bias?

Cognitive bias is a tendency that all human beings have that affects their evaluations and judgments about other people, situations, or decisions.

It can be said that cognitive bias leads us to a systematic failure in our decision-making. It is systematic because it can happen more than once and can be linked to personal values and beliefs, which makes it quite difficult to identify.

That is why it is important to know how to identify these cognitive biases, so that we can make our decisions as impartially as possible, seeking only what is best for the users.

Within the UX world, a lack of impartiality can significantly hinder product development or even research data analysis.

The definition of cognitive bias can be a bit confusing at first. But once we start giving some examples it becomes easier to understand.

To start this list, nothing better than to remember a primary motto in UX:

- You are not your user!

False consensus bias

False consensus is the tendency we have to believe that most people share the same behavior and beliefs as we do.

The concept of false consensus was described in research done in the 1970s by researchers Ross, Greene, and House.

Through a survey, they found that the respondents:

- Believed that most people thought like them and would make the same decision when faced with a problem;

- Believed that anyone who made a different decision did so because their personality traits were too extreme, and therefore out of the ordinary.

This shows us how people tend to assume that their behavior is similar to that of others, which is not necessarily true.

As an example of false consensus, we can reference the times when we think it is strange that a person does not like chocolate or does not like to travel to the beach on vacation. Well, if I think chocolate is the best food in the world, why would anyone think differently?

We tend to believe that people have the same taste as us. Another classic example is eating pizza with ketchup. People from São Paulo think this is a crime and believe that anyone who does this is a criminal. But the truth is that many people adopt this combination.

Within the UX context, the false consensus is a big trap that must be avoided.

Designers should not take their personal perceptions and beliefs as truth and apply them as being the reality of users. Because they are not! As our motto says, we are not our users.

In other words, we should not expect users to use the product in the same way that we Designers would.

How to avoid the false consensus bias?

The best way is to assume that people are not the same and don't think alike. While we can separate and classify groups of people within a certain category, we cannot generalize about behavior or belief.

Therefore, the best way to avoid falling into this cognitive bias is to do research and conduct user testing.

By doing so, we can understand how end users interact with and use our product, and we may even be surprised at how different they are from us.

Reading tip: Tree Testing: How Easily Can Users Find The Information They Need?

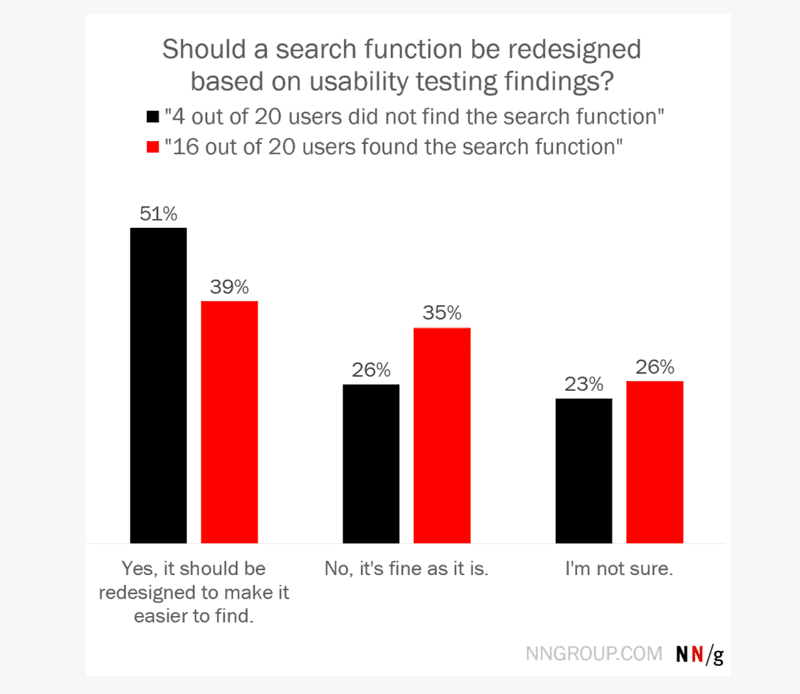

Framing Bias

Cognitive framing bias is the tendency to make a decision based on how information is presented.

Think about a survey that was conducted with your interface users. From the survey, you found that 4 out of 20 people could not find the search button.

Now you have two ways to present this result and then make a decision:

- 4 out of 20 people did not find the search button; or

- 16 out of 20 found the search button.

This scenario was actually put to survey respondents to see how they would react to each statement, even though both describe the same result.

When the statement highlighted the people who did not find the search button (4 out of 20 did not find it), most respondents said that the best decision would be to redesign.

On the other hand, when the statement highlighted the people who found the button (16 out of 20 found it), most respondents said the best decision was to keep the design.

So the same result can lead to different decisions depending on how it is presented.

How to avoid the cognitive framing bias?

There is no way around presenting the results of a survey. They are important and necessary for decision-making.

However, there are some tricks to avoid getting caught up in this cognitive bias:

- Resist the urge to make snap judgments. Calmly analyzing the data and what is being presented will bring out important insights;

- Understand the context and realize whether the data presented are necessary and sufficient for decision-making. Sometimes they are not and more research is needed;

- Try other ways of presenting the results. Think with different perceptions and points of view.
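One way to try the last tip is to generate both framings from the same counts and always show them side by side. The sketch below is a minimal illustration in Python. The function name `both_framings` and the wording of its output are assumptions made for this example, not part of any study or tool.

```python
def both_framings(found: int, total: int) -> dict[str, str]:
    """Describe one test result in its positive and its negative framing."""
    if total <= 0 or not 0 <= found <= total:
        raise ValueError("total must be positive and found must be between 0 and total")
    missed = total - found
    return {
        # Same data, stated from the point of view of the people who succeeded...
        "positive": f"{found} out of {total} people ({found / total:.0%}) found the search button",
        # ...and from the point of view of the people who did not.
        "negative": f"{missed} out of {total} people ({missed / total:.0%}) did not find the search button",
    }


report = both_framings(found=16, total=20)
print(report["positive"])
print(report["negative"])
```

Putting both sentences in the same slide or report makes it harder for a single framing to drive the decision on its own.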

Confirmation bias

Cognitive confirmation bias is the tendency to seek and accept only information that confirms a hypothesis or ideas that we agree with.

In other words, it means rejecting a theory we believe is wrong and looking only for information that confirms what we already believe.

In this way, confirmation bias contributes to the polarization of thoughts: one only reads the information in favor of a certain idea and is not open to accepting or discussing opposing arguments.

In UX, this cognitive bias keeps a group or person from being open to new information and theories about a subject or situation.

For example: in a usability test, the Designer may skew the script so that the results favor the Designer’s assumption.

How to avoid the cognitive confirmation bias?

At first, we may have the feeling that we should be neutral in our thinking. But to avoid confirmation bias, we need to be more humble than neutral.

It's okay for us to support or believe in an idea. But it starts to be harmful when we fail to see the possibility that we are wrong.

Therefore, to avoid confirmation bias we can have attitudes such as:

- listen to opinions that are different from ours and reflect on them;

- look for information that presents arguments contrary to the hypotheses that we believe;

- keep a humble mind and remember that we can be wrong about a theory, and that's okay. The important thing is to learn.

Authority Bias

Authority bias is the tendency to believe and follow "blindly" the opinion or direction of authorities.

In a Designer's day-to-day work, the following situation can happen: a director or other stakeholder tries to change the direction of the project because of personal tastes or opinions, with no scientific basis, simply because they find a certain color, button, or interaction better or more beautiful.

Since the suggestion comes from an authority – someone in a higher hierarchical position – people tend to follow it without questioning. As a result, the whole project can be harmed by this authority bias.

But as we have seen, we should not steer a project based on hunches and personal opinions. Everything should be tested with users to find the best solution for them in that situation.

However, when there is an authority involved, we tend to believe their views, even if there is no theoretical basis or real evidence to support them.

How to avoid the cognitive authority bias?

We always have to keep in mind that to make a decision we must have research, facts, data, and a theoretical basis.

So in any situation where an authority (director, stakeholder, or Designer) imposes a change on the project without any basis, it's important to:

- Demonstrate the testing and research data;

- Communicate the results clearly;

And not just "blindly" follow the direction or even the order of this authority.

Reading tip: UX Writing: How Words Can Help User Experience

Reputation at risk bias

The cognitive bias of reputation at risk is the tendency a person has to protect their reputation by not exposing themselves and by avoiding the risk of trying different approaches.

The situation may be more common than we think:

A Designer, at the beginning of a project, proposes an idea for a product. Everyone likes the idea and the team starts working on it.

The Designer was not prepared for so much attention and responsibility. After all, the idea was his. So the Designer begins to fear that his reputation will suffer if the idea proves to be flawed.

So the Designer begins to "sabotage" the research and analysis, doing only the basics and not digging deeply into the data on the positive and, especially, the negative points of the idea, for fear that it will prove to be weak and put his reputation at risk.

Reputation bias, therefore, is about the fear of taking risks and putting your reputation on the line, and, as a result, settling for simple, easy, and comfortable solutions.

How to avoid the cognitive reputation bias?

The cognitive reputation bias is closely linked to people's psychological safety.

Thus, the way to avoid this bias is to work in a safe environment so that people can take risks and make mistakes without fear of reprisals.

It's important to celebrate the successes, but it's also essential to celebrate the attempts, the failures, and what we have learned from them.

In addition, it's interesting to show which lessons have been learned from the ideas, theories, and attempts.

Groupthink bias

When we work in a group, it is quite common to try to maintain harmony and a good atmosphere among the group members.

For this reason, on certain occasions, it is common for the group to accept some decisions in order to avoid internal conflicts.

This situation is known as groupthink bias.

How to avoid the cognitive groupthink bias?

To avoid this cognitive bias, we have to steer clear of certain behaviors, as well as the tendency to avoid conflict:

- avoid stating your preferences in front of other group members;

- avoid stating your expectations about something in the project;

- give some people in the group the freedom to challenge your ideas without feeling uncomfortable.

Reading tip: Why Are Balanced Teams So Important To UX?

Sunk Cost Fallacy

The sunk cost fallacy is a cognitive bias based on people's fear of losing something – loss aversion.

Imagine a classic situation: a young man has entered a 4-year business school. At the beginning of the third year, he realizes that the course is not what he expected and feels frustrated. At the same time, he thinks that it is not worth giving up and switching to another career. Because he has already invested so much time and so much money, he decides to finish college.

In this way, the sunk cost fallacy is the tendency to make bad decisions based on an investment of time and money that has already been made and cannot be recovered.

The same can happen in a project. At a given moment, it becomes clear that the product under development will not meet the needs of the end user. But at that moment, the Designers think of all the time and money already invested, and so they continue with the project even knowing that it is already a lost cause.

However, not recognizing this fallacy will only bring more losses and more frustration.

Therefore, it is necessary to know how to avoid it.

How to avoid the sunk cost fallacy?

There are some ways to avoid the sunk cost fallacy:

- Always have the macro view of the project. Be clear about what the objectives to be achieved are. To do this, always have them in sight, on a board, or on post-its on the wall. This will make sure that you always remember them when making decisions;

- Control your investments. Before starting the project, it is important to know your investment projection and your earnings prediction. Always keep these numbers in mind and always update them. This way it’s easier to identify when some limit has been exceeded and when it’s better not to continue with the project;

- Don't be afraid of making mistakes. Keep in mind that mistakes happen and that we need to learn from them. Take calculated risks to avoid big losses, but know when to stop.
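The "control your investments" tip can be turned into a simple check. The Python sketch below is a minimal illustration under an assumption of our own: the decision to continue compares only the money still to be spent with the earnings still expected, and deliberately ignores what has already been spent. The function name `should_continue` and all the figures are hypothetical.

```python
def should_continue(already_spent: float, remaining_cost: float, expected_earnings: float) -> bool:
    """Return True when finishing the project is expected to pay off.

    already_spent is accepted only to make the point that it plays no part
    in the decision: that money is gone whichever option is chosen.
    """
    return expected_earnings > remaining_cost


# Hypothetical figures: a lot has been invested, but finishing costs more than it returns.
print(should_continue(already_spent=200_000, remaining_cost=80_000, expected_earnings=50_000))

# Same outlook for the future, much smaller past investment: the answer is the same.
print(should_continue(already_spent=5_000, remaining_cost=80_000, expected_earnings=50_000))
```

Both calls give the same answer because only the future numbers differ between "stop" and "continue". A real project would also weigh non-financial goals, which this sketch leaves out.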

Survivorship Bias

Survivorship bias, also called survival bias, is perhaps one of the most interesting and surprising cognitive biases.

To illustrate it, let's look at a wartime case:

In order to improve their squadron, scientists analyzed every plane that returned from the battlefields. They noticed that all the planes had bullet holes in their wings and tails, and they quickly arranged to have these parts of the planes strengthened to better protect them.

Although this seems like the most obvious solution, the scientists did not take into consideration that if the planes returned to base, the damage they had taken was not critical.

In fact, the scientists should have paid attention to the planes that did not return, since those were hit in vital parts. At the very least, they should have strengthened the parts of the returning planes that were not hit, since the planes only made it back because those vital parts were spared.

So survivorship bias is the tendency to look only at what survived (the positive cases) and to ignore what did not.

Bringing it into the world of UX, survivorship bias happens when more focus is given to active customers who are satisfied with the product, when in fact you should also understand why those who are no longer users were not satisfied.

By doing your research only with active users of your digital product, you are looking at only part of your market, and a biased part at that, because it is the satisfied users who have stayed. So you won't be aware of all the flaws and you won't pay attention to what can be done to increase your user base.

How to avoid the cognitive survivorship bias?

To avoid survivorship bias, you need to build the habit of viewing data in a comprehensive way.

We tend to pay attention only to what is working because it's probably what is most apparent.

Therefore, in testing and research results, it's more important to understand why something is not working than why something is.

In addition, it is also very important to survey and research people who are not yet users of your products, or who are no longer users. This way you can collect information and insights about why people abandon your product or do not even consider it.

So what do you need to do to make people who don't like your product start liking it?

Reading tip: Product Manager: Business, Technology, and User Experience

Affinity Bias

The cognitive affinity bias is the tendency we have to relate to people who are similar to us – physically, by life experiences, etc.

This tendency is understandable, because it brings us comfort.

However, when talking about UX projects, we have to remember the motto from the beginning of this article: "you are not your user". So, you have to think about the profile of the person who will use the product you are developing.

That is why it's important to build a diverse team, with different types of people.

This way, with different people on your team, your ideas and your perception will not be bound to affinity bias. After all, you will have a team with other visions about the solutions and the user.

How to avoid the cognitive affinity bias?

To counter the cognitive affinity bias, you need to deconstruct certain thoughts and concepts.

The fact that we feel more comfortable with people like us can, in some cases, lead to the creation of prejudices.

Therefore, it is necessary to have an open mind and get rid of these prejudices. When it comes to putting together a team, give preference to people who think differently and who have had different experiences than you.

A diverse team brings more perspectives and more creativity when it comes to thinking of solutions for the user.

Cognitive bias is normal, don't be afraid

At first, it may seem that having these cognitive biases is a bad thing and that we have to get rid of them all quickly.

Although avoiding them is quite important and interesting for our lives and our projects, it's nearly impossible to get rid of them for good.

Biases are normal for people, because of their life experience, their values, and their goals.

But more important than trying to get rid of them – which is nearly impossible – is to know that they exist and understand how they manifest and how it is possible to neutralize them.

As we’ve seen, letting ourselves be led by some cognitive bias can cause us to make bad decisions.

So instead of thinking about getting rid of them, think about which biases are the most common in your daily life and how to avoid being influenced by them all the time.